Over the past year, the term Agentic AI has been applied to an increasingly broad range of systems - from simple retrieval-augmented chatbots to multi-agent orchestration frameworks. In practice, nearly every system built around a Large Language Model (LLM) today is called an “agent,” whether it has a single generation step or coordinates dozens of reasoning loops.

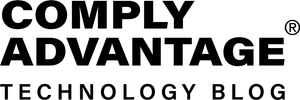

This inflation of the term hides a more useful idea: agentic behavior exists on a spectrum of autonomy. What differentiates one system from another is not whether it “is” or “is not” an agent - it’s how much autonomy the system is given over its own control flow, reasoning, and use of external capabilities.

At one end of the spectrum are scripted LLM/AI workflows - low in autonomy, but predictable, auditable, and deterministic in their control flow.

Example: A customer service bot that is scripted to always search the FAQ database first, then always use the top 3 results to formulate an answer. It cannot decide to do anything else.
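The scripted bot can be sketched in a few lines. This is a minimal illustration with several stand-ins. A toy in-memory `FAQ` dictionary with word-overlap ranking replaces a real FAQ database, and a stub `llm` function replaces a model call. All of these names are hypothetical. The control flow lives entirely in `answer`: search always runs first, and exactly three results are always passed on.

```python
# Hypothetical in-memory FAQ standing in for a real FAQ database.
FAQ = {
    "How do I reset my password?": "Use the 'Forgot password' link on the login page.",
    "How do I change my email?": "Go to Settings > Account > Email.",
    "What are your support hours?": "Support is available 9am-5pm, Monday to Friday.",
    "How do I delete my account?": "Contact support to request account deletion.",
}

def search_faq(query: str, k: int = 3) -> list[tuple[str, str]]:
    """Rank FAQ entries by naive word overlap with the query (stand-in for real search)."""
    words = set(query.lower().split())
    ranked = sorted(
        FAQ.items(),
        key=lambda item: len(words & set(item[0].lower().split())),
        reverse=True,  # sort is stable, so ties keep their original order
    )
    return ranked[:k]

def llm(prompt: str) -> str:
    """Stub model call that reports how many FAQ entries it was given."""
    return f"[answer generated from {prompt.count('Q:')} FAQ entries]"

def answer(question: str) -> str:
    # Fixed control flow: always search first, always use the top 3 results.
    hits = search_faq(question, k=3)
    context = "\n".join(f"Q: {q}\nA: {a}" for q, a in hits)
    return llm(f"Answer using only these FAQs:\n{context}\n\nUser question: {question}")

print(answer("How do I reset my password?"))  # [answer generated from 3 FAQ entries]
```

Nothing in this code lets the model skip the search, change `k`, or call a different tool. That is what places it at the low-autonomy end.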

At the other end are adaptive, goal-driven systems that reason, plan, and act with minimal external orchestration.

Example: An autonomous research agent. Given the goal "Analyze market trends for AI," it can decide to search the web, identify a key website, write a new Python script to scrape data from that site, and then summarize its findings.

Most production systems live somewhere in between, balancing adaptability with predictability.

Understanding that continuum (and being deliberate about where a given system should sit) is the foundation of good agentic design. This report aims to clarify that spectrum, focusing on the practical dimensions of autonomy and how core capabilities (reasoning, retrieval, tool use, memory, reflection) combine to create different behavioral profiles.

1 - Core Capabilities of Agentic Systems

Every LLM-based system, from a single prompt to a complex agent team, is built on a set of common capabilities. These are not architectures - they are composable building blocks that, when combined, define how an agent perceives, reasons, and acts. An agent's overall autonomy is therefore determined by how much control over each of these capabilities is delegated to the model.

1.1 - Reasoning and Planning

Reasoning is the foundation of autonomy. It is the model’s ability to interpret ambiguous goals, decompose them into intermediate steps, and plan a course of action.

In simple systems, reasoning happens once - inside a single prompt. In more autonomous designs, reasoning becomes iterative. The system plans, executes, observes results, and revises its plan.

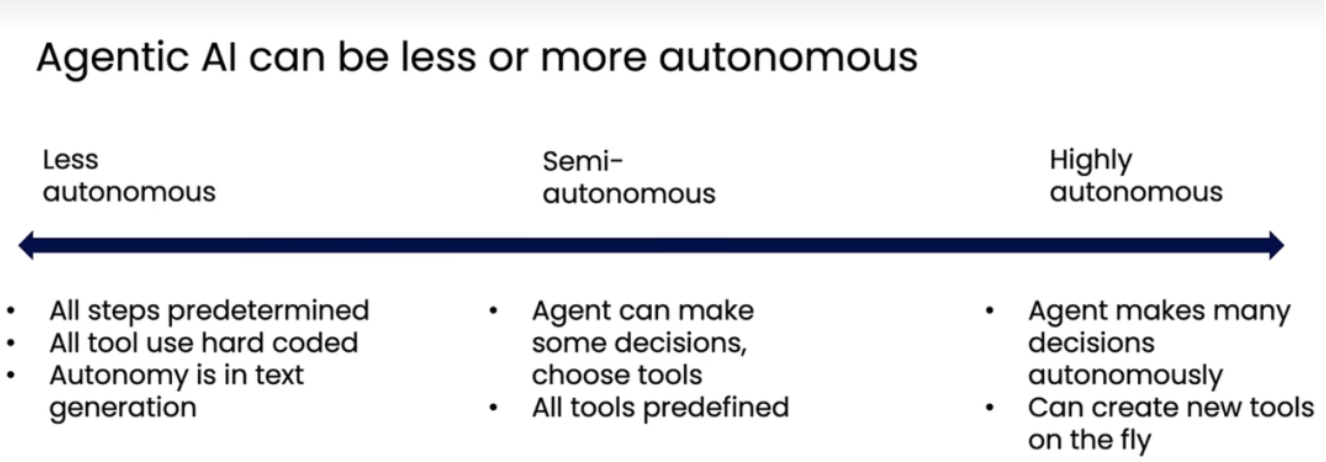

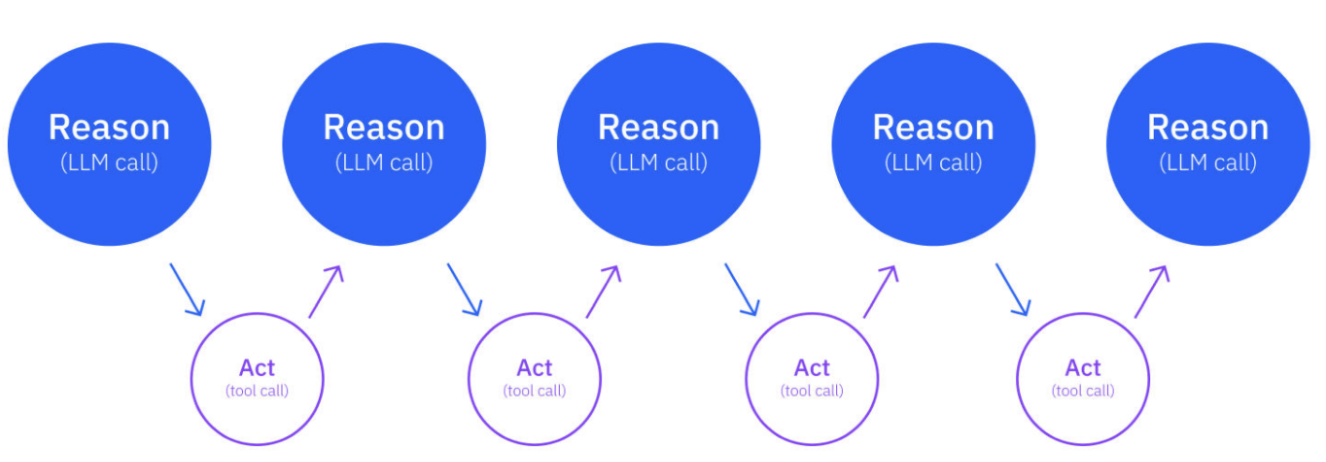

Prompting patterns like ReAct (Reason + Act) and ReWOO (Reasoning Without Observation) express different control strategies:

- ReAct: The model reasons about what to do next, acts via a tool or API, observes the result, and repeats.

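- ReWOO: The model plans the full sequence of tool calls upfront. The calls are then executed without feeding intermediate observations back to the planner, and the results are synthesized into a final answer - efficient when the path is known in advance.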
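The two control strategies can be contrasted in a short sketch. It assumes a hypothetical `check_stock` tool backed by toy data. The stub functions `stub_policy`, `stub_planner` and `stub_solver` stand in for LLM calls. In `react`, the model sees each observation before choosing its next action. In `rewoo`, the full plan is fixed before any tool runs, and the results are combined in one synthesis step. The published ReWOO design also lets plan steps reference earlier results through placeholders, which this sketch omits.

```python
from typing import Callable

# Hypothetical tool backed by toy data; a real agent would call an API or database.
STOCK = {"widget": "12 in stock", "gadget": "0 in stock"}
TOOLS: dict[str, Callable[[str], str]] = {"check_stock": lambda item: STOCK.get(item, "unknown")}

def react(goal: str, policy, max_steps: int = 5) -> str:
    """ReAct-style loop: decide the next action, run it, observe, repeat."""
    history: list[tuple[str, str, str]] = []  # (action, argument, observation)
    for _ in range(max_steps):
        action, arg = policy(goal, history)  # model chooses using all observations so far
        if action == "finish":
            return arg
        history.append((action, arg, TOOLS[action](arg)))
    return "stopped: step limit reached"

def rewoo(goal: str, planner, solver) -> str:
    """ReWOO-style flow: plan every tool call upfront, execute, synthesize once."""
    plan = planner(goal)  # list of (tool, argument), fixed before execution
    evidence = [(tool, arg, TOOLS[tool](arg)) for tool, arg in plan]
    return solver(goal, evidence)  # observations reach the model only here

# Stubs standing in for LLM calls.
def stub_policy(goal, history):
    items = ["widget", "gadget"]
    if len(history) < len(items):
        return "check_stock", items[len(history)]
    return "finish", "; ".join(f"{arg}: {obs}" for _, arg, obs in history)

def stub_planner(goal):
    return [("check_stock", "widget"), ("check_stock", "gadget")]

def stub_solver(goal, evidence):
    return "; ".join(f"{arg}: {obs}" for _, arg, obs in evidence)

print(react("Which items are in stock?", stub_policy))  # widget: 12 in stock; gadget: 0 in stock
print(rewoo("Which items are in stock?", stub_planner, stub_solver))  # same result
```

Both produce the same answer here. With a real model, the difference shows up in cost and adaptability. ReAct makes one model call per step and can change course after each observation. ReWOO uses a planning call and a solving call and cannot react to a surprising intermediate result.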

1.2 - Retrieval (RAG)

Retrieval-Augmented Generation (RAG) is often treated as a system design pattern, but it’s better understood as a capability that can operate at different levels of autonomy.

At the low end, retrieval is scripted:

Example: The system always fetches the top 3 documents before calling the model.

At higher autonomy, retrieval becomes adaptive:

Example: The model itself decides when more information is needed, formulates the query, and performs iterative lookups until it has everything it needs.

Both systems use retrieval - but only one delegates control of retrieval to the model. That control decision determines the autonomy, not the presence of a vector store or retriever tool.
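The difference is easy to see in code. In this sketch, `retrieve` is a naive keyword retriever over a toy `CORPUS`. Both are hypothetical stand-ins for a vector store. The function `stub_decide` stands in for the model. Both RAG functions call the same retriever; what changes is who decides whether, how often, and with what query it runs.

```python
import re

# Toy corpus standing in for a vector store; keys are document ids.
CORPUS = {
    "d1": "ReAct interleaves reasoning steps with tool calls.",
    "d2": "ReWOO plans all tool calls before executing any of them.",
    "d3": "Reflection loops use a critic to revise drafts.",
}

def tokens(text: str) -> set[str]:
    return set(re.findall(r"[a-z]+", text.lower()))

def retrieve(query: str, k: int = 3) -> list[str]:
    """Return up to k doc ids sharing at least one word with the query (corpus order)."""
    q = tokens(query)
    return [doc_id for doc_id, text in CORPUS.items() if q & tokens(text)][:k]

def scripted_rag(question: str) -> list[str]:
    # Low autonomy: exactly one retrieval, always run before the model is called.
    return retrieve(question, k=3)

def adaptive_rag(question: str, decide, max_lookups: int = 3) -> list[str]:
    # Higher autonomy: the model decides whether to search and what to search for.
    gathered: list[str] = []
    for _ in range(max_lookups):
        query = decide(question, gathered)  # a query string, or None when satisfied
        if query is None:
            break
        gathered += [d for d in retrieve(query) if d not in gathered]
    return gathered

def stub_decide(question, gathered):
    # Stand-in for the model: look up each topic not yet covered by gathered docs.
    for topic in ("react", "reflection"):
        if not any(topic in tokens(CORPUS[d]) for d in gathered):
            return topic
    return None

print(scripted_rag("How does ReAct differ from ReWOO?"))  # ['d1', 'd2']
print(adaptive_rag("Compare agent patterns", stub_decide))  # ['d1', 'd3']
```

The `max_lookups` bound keeps the adaptive loop from searching indefinitely, even when the model never decides it has enough.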

1.3 - Tool Use and Environment Interaction

Tool use expands an agent’s reach beyond text generation - from database queries and code execution to API calls and simulations. The autonomy question is: who decides which tools to use, and when?

In a low-autonomy setup, tools are called by a predefined pipeline. In a more autonomous system, the model dynamically selects tools, reasons about when to invoke them, and interprets their results.
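The sketch below shows both setups with a hypothetical two-tool registry. It assumes a JSON tool-call format of the form `{"tool": ..., "args": {...}}`. Real model APIs define their own function-calling formats, so this format is only illustrative. In `pipeline`, the code picks the tool. In `dispatch`, the model's output picks it, and the result (including errors) would be returned to the model to interpret.

```python
import json

# Hypothetical tool registry; the descriptions are what a model would see when choosing.
TOOLS = {
    "add": {"description": "Add two numbers a and b.", "fn": lambda a, b: a + b},
    "upper": {"description": "Uppercase the string s.", "fn": lambda s: s.upper()},
}

def tool_menu() -> str:
    """Render tool names and descriptions for inclusion in the model's prompt."""
    return "\n".join(f"{name}: {spec['description']}" for name, spec in TOOLS.items())

def pipeline(text: str) -> str:
    # Low autonomy: the code decides that 'upper' runs, on every input.
    return TOOLS["upper"]["fn"](text)

def dispatch(model_output: str):
    """Higher autonomy: execute whichever tool the model selected."""
    try:
        call = json.loads(model_output)  # e.g. {"tool": "add", "args": {"a": 2, "b": 3}}
        spec = TOOLS[call["tool"]]
        return spec["fn"](**call.get("args", {}))
    except (json.JSONDecodeError, KeyError, TypeError) as exc:
        # Errors go back to the model as an observation instead of crashing the loop.
        return f"error: {type(exc).__name__}: {exc}"

print(dispatch('{"tool": "add", "args": {"a": 2, "b": 3}}'))  # 5
```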

1.4 - State and Memory

Memory gives continuity - the ability to recall context across steps or sessions. Two forms matter in practice:

- Short-term memory: State kept for the duration of a single task or session (e.g., conversation history, intermediate thoughts).

- Long-term memory: Durable storage of reasoning traces, facts, or strategies that inform future actions.

Memory introduces autonomy by allowing the system to adapt based on past experience. A “learning agent” that adjusts its future actions based on historical success is more autonomous than one that starts from scratch every time.
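One way to make "adjusting based on historical success" concrete is a long-term store of per-strategy success counts. The sketch below is hypothetical. The strategy names and task types are illustrative, and the store is an in-process dict rather than durable storage. It uses Laplace smoothing so that untried strategies start at a neutral 0.5 and are still preferred over strategies that have failed.

```python
from collections import defaultdict

class StrategyMemory:
    """Long-term record of how often each strategy succeeded for each task type."""

    def __init__(self):
        # (task_type, strategy) -> [successes, attempts]; a real system would persist this.
        self.stats = defaultdict(lambda: [0, 0])

    def record(self, task_type: str, strategy: str, success: bool) -> None:
        entry = self.stats[(task_type, strategy)]
        entry[0] += int(success)
        entry[1] += 1

    def success_rate(self, task_type: str, strategy: str) -> float:
        successes, attempts = self.stats.get((task_type, strategy), (0, 0))
        # Laplace smoothing: untried strategies score 0.5 rather than 0 or undefined.
        return (successes + 1) / (attempts + 2)

    def best_strategy(self, task_type: str, candidates: list[str]) -> str:
        # Ties go to the earliest candidate, so the first entry acts as the default.
        return max(candidates, key=lambda c: self.success_rate(task_type, c))

memory = StrategyMemory()
options = ["search_first", "answer_directly"]
print(memory.best_strategy("billing", options))  # search_first (no history yet)
memory.record("billing", "search_first", success=False)
print(memory.best_strategy("billing", options))  # answer_directly (0.5 beats 1/3)
```

Always picking the current best is greedy, and a production agent would typically mix in some exploration. The accumulated counts are also exactly the kind of state that makes future behavior harder to predict.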

However, persistent memory also introduces risk:

- It increases unpredictability (emergent behaviors from accumulated data).

- It demands governance (what gets stored, when, and why).
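Governance can be enforced at the point where memory is written. The sketch below assumes a hypothetical policy with four rules:

- Only allowlisted record kinds may be stored.
- Every write must state a reason and a source.
- Refused writes are logged for audit.
- Records expire after a time-to-live, so stale data cannot steer behavior indefinitely.

The kinds, field names, and TTL are illustrative, not a standard.

```python
import time
from dataclasses import dataclass, field
from typing import Optional

# Hypothetical policy: the only kinds of facts the agent may persist.
ALLOWED_KINDS = {"user_preference", "strategy_outcome"}

@dataclass
class MemoryRecord:
    kind: str
    content: str
    reason: str   # why this was stored, for later audits
    source: str   # which session or step produced it
    created_at: float = field(default_factory=time.time)

class GovernedMemory:
    def __init__(self, ttl_seconds: float):
        self.ttl = ttl_seconds
        self.records: list[MemoryRecord] = []
        self.rejected: list[MemoryRecord] = []  # audit trail of refused writes

    def write(self, record: MemoryRecord) -> bool:
        # What gets stored: only allowlisted kinds, and only with a stated reason.
        if record.kind not in ALLOWED_KINDS or not record.reason:
            self.rejected.append(record)
            return False
        self.records.append(record)
        return True

    def read(self, kind: str, now: Optional[float] = None) -> list[MemoryRecord]:
        now = time.time() if now is None else now
        # Expired records are skipped so old data stops influencing future actions.
        return [r for r in self.records if r.kind == kind and now - r.created_at < self.ttl]
```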

1.5 - Reflection and Self-Evaluation

Reflection turns an LLM from a reactive generator into an iterative problem-solver. It’s the process of evaluating one’s own output, identifying weaknesses, and retrying with improvements.

The Reflection pattern formalizes this through a feedback loop:

Actor produces output → Critic evaluates → Actor revises.

Reflection adds autonomy by allowing the system to self-correct without external supervision. In practice, reflection can take lighter forms too, such as having the model recheck assumptions or summarize confidence before producing a final output.

Use it selectively:

- Valuable for high-stakes outputs where accuracy matters.

- Each iteration adds at least one critique call and one revision call, so cost and latency grow with every round.
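A minimal Actor → Critic → Actor loop needs a round limit, since every extra round costs more calls. Here `stub_actor` and `stub_critic` are hypothetical stand-ins. The actor's first draft deliberately drops an item. The critic is a deterministic checker, playing the role that a second LLM call or an automated test would play in practice.

```python
def reflect(task, actor, critic, max_rounds: int = 3):
    """Actor drafts, critic evaluates, actor revises; stop on approval or when the budget runs out."""
    draft = actor(task, feedback=None)
    history = []  # (draft, feedback) per round, useful for auditing the loop
    for _ in range(max_rounds):
        approved, feedback = critic(task, draft)
        history.append((draft, feedback))
        if approved:
            return draft, history
        draft = actor(task, feedback=feedback)
    # Budget exhausted: the latest revision has not been reviewed by the critic.
    return draft, history

def stub_actor(numbers, feedback):
    # First draft forgets the last number; a revision (feedback present) includes it.
    return sum(numbers) if feedback else sum(numbers[:-1])

def stub_critic(numbers, draft):
    expected = sum(numbers)
    if draft == expected:
        return True, None
    return False, f"expected {expected}, got {draft}"

answer, rounds = reflect([1, 2, 3], stub_actor, stub_critic)
print(answer, len(rounds))  # 6 2
```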

2 - The Dimensions of Autonomy

Agentic autonomy isn’t a single switch - it’s multi-dimensional. Each dimension describes how much decision-making power has shifted from the human or orchestrator to the model itself.

No single dimension defines autonomy alone; they interact. For example, you can have a system with internal control flow but no long-term memory (a ReAct agent), or one with strong self-evaluation but external orchestration (Reflexion under human review).

In practice, each capability increases autonomy along one or more of these axes (control flow, tool interaction, self-evaluation, and statefulness):

- RAG affects tool interaction and control flow.

- Reflection increases self-evaluation.

- Memory extends statefulness.

Understanding these interactions enables you to design systems that are intentionally agentic, not accidentally complex.
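One way to make these interactions explicit during design is to record an autonomy profile per system. The sketch below is a hypothetical encoding: the three-level scale and the field names are illustrative. `CAPABILITY_EFFECTS` simply transcribes the capability-to-dimension list above. It treats RAG as raising autonomy only when retrieval is model-driven.

```python
from dataclasses import dataclass, replace
from enum import IntEnum

class Level(IntEnum):
    LOW = 0     # fixed by the orchestrator, or capability absent
    MEDIUM = 1  # model decides within orchestrator-set bounds
    HIGH = 2    # model decides largely on its own

@dataclass(frozen=True)
class AutonomyProfile:
    control_flow: Level = Level.LOW
    tool_interaction: Level = Level.LOW
    self_evaluation: Level = Level.LOW
    statefulness: Level = Level.LOW

# Capability -> dimensions it raises, following the list above.
CAPABILITY_EFFECTS = {
    "adaptive_rag": ("tool_interaction", "control_flow"),
    "reflection": ("self_evaluation",),
    "long_term_memory": ("statefulness",),
}

def add_capability(profile: AutonomyProfile, capability: str,
                   level: Level = Level.HIGH) -> AutonomyProfile:
    """Return a new profile with the capability's dimensions raised to at least `level`."""
    changes = {dim: max(getattr(profile, dim), level) for dim in CAPABILITY_EFFECTS[capability]}
    return replace(profile, **changes)

# The two examples from the text: internal control flow without long-term memory,
# and strong self-evaluation under external orchestration.
react_agent = AutonomyProfile(control_flow=Level.HIGH, tool_interaction=Level.HIGH)
reflexion_under_review = AutonomyProfile(self_evaluation=Level.HIGH)
```

Because `add_capability` only raises levels, composing capabilities never silently lowers autonomy on a dimension another capability already raised.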

3 - Selecting the Right Level of Autonomy

Autonomy is powerful - but costly. Each step toward internalized control flow reduces predictability and increases operational burden. As agents become more autonomous, several forms of governance become harder:

- Auditability: Understanding why the agent made a specific decision becomes significantly more difficult as more reasoning happens inside the model rather than in an external pipeline.

- Security: Greater agency expands the attack surface. Consider an agent that can dynamically choose tools, interact with new data sources, or even create its own tools on the fly. Unless its permissions are tightly bounded, it can be manipulated (for example, by instructions injected into retrieved content) or can misuse its capabilities.

- Control: Agents with long-term memory or self-modifying behavior can drift over time, becoming less predictable and harder to manage.
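Bounding agency can start with a thin wrapper between the model and its tools. This sketch assumes a hypothetical `BoundedToolbox` with an allowlist, a per-tool call limit, and an audit log of every attempt. It illustrates the idea rather than a complete defense: it does not, for instance, validate arguments or inspect tool outputs for injected instructions.

```python
class ToolPolicyError(Exception):
    """Raised when the agent requests a tool call the policy does not permit."""

class BoundedToolbox:
    def __init__(self, tools: dict, allowed: set, max_calls_per_tool: int):
        self.tools = tools
        self.allowed = set(allowed)
        self.max_calls = max_calls_per_tool
        self.counts: dict = {}
        self.audit_log: list = []  # (tool, kwargs, outcome) for every attempt

    def call(self, name: str, **kwargs):
        # Only pre-registered, allowlisted tools: the agent cannot add tools at runtime.
        if name not in self.allowed or name not in self.tools:
            self.audit_log.append((name, kwargs, "denied"))
            raise ToolPolicyError(f"tool {name!r} is not permitted")
        if self.counts.get(name, 0) >= self.max_calls:
            self.audit_log.append((name, kwargs, "limit_reached"))
            raise ToolPolicyError(f"call limit reached for {name!r}")
        self.counts[name] = self.counts.get(name, 0) + 1
        result = self.tools[name](**kwargs)
        self.audit_log.append((name, kwargs, "ok"))
        return result

tools = {
    "read_file": lambda path: f"<contents of {path}>",  # hypothetical tools
    "delete_file": lambda path: f"deleted {path}",
}
box = BoundedToolbox(tools, allowed={"read_file"}, max_calls_per_tool=2)
print(box.call("read_file", path="notes.txt"))  # <contents of notes.txt>
```

The audit log also serves the auditability concern above: every attempted action, including refused ones, leaves a record.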

The right amount of autonomy depends entirely on your product goals, operational constraints, and risk tolerance.

Design Principle: Minimum Viable Autonomy

Start with the simplest system that can solve the problem. Increase autonomy only when adaptability provides measurable value.

Assessment factors

- Task Uncertainty - How predictable are inputs and outcomes?
  - High uncertainty → autonomy adds value.
  - Low uncertainty → restricted workflows are safer and faster.

- Cost and Latency Sensitivity - Each reasoning loop adds tokens and seconds.
  - Reflective or multi-tool agents are expensive to operate.

- Auditability and Governance - Can you explain why a system acted as it did?
  - External orchestration → fully traceable control flow.
  - High autonomy → emergent reasoning that is harder to debug.

- Safety and Error Recovery - Does the system have bounded failure modes?
  - Define explicit stop conditions and evaluation criteria for autonomous loops.
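Stop conditions are easiest to audit when they are explicit in the loop rather than left to the model. The sketch below is hypothetical. `step` stands in for one reasoning or tool-use iteration that reports its token usage, and `is_done` is the task's evaluation criterion. The limits are placeholder values. The loop returns the reason it stopped, so every termination is traceable.

```python
import time
from dataclasses import dataclass

@dataclass
class StopConditions:
    max_steps: int = 10
    max_tokens: int = 20_000    # placeholder budget across all steps
    max_seconds: float = 60.0   # placeholder wall-clock limit

def run_bounded(step, is_done, limits: StopConditions, clock=time.monotonic):
    """Run an autonomous loop until success or until any stop condition triggers.

    step(state) -> (new_state, tokens_used); is_done(state) -> bool.
    Returns (final_state, reason).
    """
    start, tokens, state = clock(), 0, None
    for n in range(1, limits.max_steps + 1):
        previous = state
        state, used = step(state)
        tokens += used
        if is_done(state):
            return state, f"success after {n} steps"
        if state == previous:
            return state, "stopped: no progress"
        if tokens >= limits.max_tokens:
            return state, "stopped: token budget exhausted"
        if clock() - start >= limits.max_seconds:
            return state, "stopped: time limit"
    return state, "stopped: step limit"

def count_up(state):
    # Toy step: increments a counter and reports 100 tokens per iteration.
    return (state or 0) + 1, 100

print(run_bounded(count_up, lambda s: s == 3, StopConditions()))  # (3, 'success after 3 steps')
```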